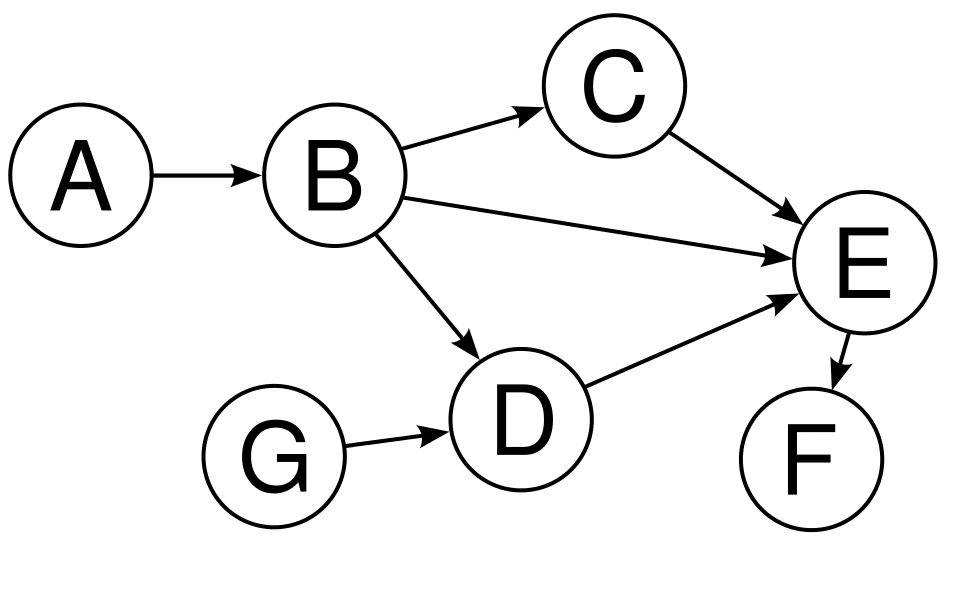

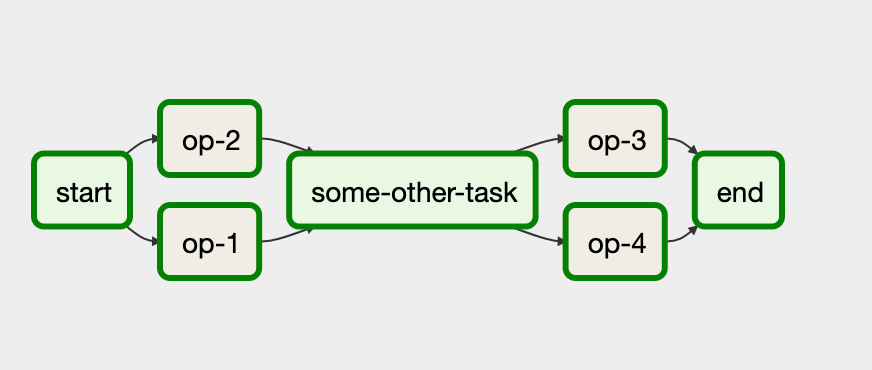

Providers packages include integrations with third-party projects. They are versioned and released independently of the Apache Airflow core. Examples of provider integrations include Internet Message Access Protocol (IMAP), Microsoft PowerShell Remoting Protocol (PSRP), and Microsoft Windows Remote Management (WinRM).

Airflow has an official Dockerfile and Docker image published in DockerHub as a convenience package. You can extend and customize the image according to your requirements.

Airflow has an official Helm Chart that will help you set up your own Airflow on a cloud/on-prem Kubernetes environment and leverage its scalable nature to support a large group of users. Thanks to Kubernetes, we are not tied to a specific cloud provider.

Airflow releases an official Python API client that can be used to easily interact with the Airflow REST API from Python code. Airflow also releases an official Go API client that can be used to easily interact with the Airflow REST API from Go code.

Dependencies are a powerful and popular Airflow feature. In Airflow, your pipelines are defined as Directed Acyclic Graphs (DAGs). Each task is a node in the graph, and dependencies are the directed edges that determine how to move through the graph. Because of this, dependencies are key to following data engineering best practices: they help you define flexible pipelines with atomic tasks.
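To make the graph picture concrete, here is a minimal sketch in plain Python (standard library only, Python 3.9+) rather than Airflow itself. The task names are hypothetical and chosen only for illustration. Each task is a node, each dependency is a directed edge, and a topological sort gives one order in which every task runs after the tasks it depends on. In Airflow the same relationships would normally be declared between operators, for example with `>>`.

```python
from graphlib import TopologicalSorter, CycleError

# Hypothetical pipeline: each key is a task, each value is the set of
# upstream tasks it depends on (the directed edges of the graph).
dependencies = {
    "extract": set(),
    "clean_orders": {"extract"},       # fan-out: two tasks depend on "extract"
    "clean_customers": {"extract"},
    "load": {"clean_orders", "clean_customers"},  # fan-in: "load" waits for both
}


def execution_order(deps):
    """Return one valid order in which every task follows its upstream tasks."""
    return list(TopologicalSorter(deps).static_order())


def is_acyclic(deps):
    """Return True if the dependency graph contains no cycle."""
    try:
        execution_order(deps)
        return True
    except CycleError:
        return False


order = execution_order(dependencies)
print(order)
```

The two `clean_*` tasks may appear in either order, since neither depends on the other. A graph in which two tasks depend on each other is rejected, which is the "acyclic" part of a DAG.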
Apache Airflow Core includes the webserver, scheduler, CLI, and other components that are needed for a minimal Airflow installation.

What makes Airflow so useful is its ability to handle complex relationships between tasks. You can easily construct tasks that fan in and fan out.

When you set `retries` to a value, Airflow treats a failed task as one that will be retried later. If you delete `retries` and `retry_delay` from the DAG arguments, you'll see the task set to failed when you try to run the DAG.

Options that can be specified on a per-DAG basis:

- `concurrency`: the number of task instances allowed to run concurrently across all active runs of the DAG this is set on. Defaults to `core.dag_concurrency` if not set.
- `max_active_runs`: maximum number of active runs for this DAG. The scheduler will not create new active DAG runs once this limit is hit. Defaults to `core.max_active_runs_per_dag` if not set.

Examples:

- Only allow one run of this DAG to be running at any given time: `dag = DAG('my_dag_id', max_active_runs=1)`
- Allow a maximum of 10 tasks to be running across a max of 2 active DAG runs: `dag = DAG('example2', concurrency=10, max_active_runs=2)`

Options that can be specified on a per-operator basis:

- `pool`: the pool to execute the task in. Pools can be used to limit parallelism for only a subset of tasks.
- `task_concurrency`: concurrency limit for the same task across multiple DAG runs.

Example: `t1 = BaseOperator(pool='my_custom_pool', task_concurrency=12)`

Options that are specified across an entire Airflow setup:

- `core.parallelism`: maximum number of tasks running across an entire Airflow installation.
- `core.dag_concurrency`: max number of tasks that can be running per DAG (across multiple DAG runs).
- `core.non_pooled_task_slot_count`: number of task slots allocated to tasks not running in a pool.
- `core.max_active_runs_per_dag`: maximum number of active DAG runs, per DAG.
- `scheduler.max_threads`: how many threads the scheduler process should use to schedule DAGs.
- `celery.worker_concurrency`: max number of task instances that a worker will process at a time if using CeleryExecutor.
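The setup-wide names above follow a `section.option` pattern, which corresponds to sections and keys in an `airflow.cfg`-style file. As a rough sketch, the snippet below parses such a fragment with Python's standard `configparser` and flattens it into the dotted names used in the list. The numeric values are placeholders for illustration, not recommendations, and `load_limits` is a hypothetical helper, not part of Airflow.

```python
import configparser

# Illustrative airflow.cfg-style fragment. The values are placeholders
# (assumptions for this sketch), not tuning advice.
SAMPLE_CFG = """
[core]
parallelism = 32
dag_concurrency = 16
non_pooled_task_slot_count = 128
max_active_runs_per_dag = 16

[scheduler]
max_threads = 2

[celery]
worker_concurrency = 16
"""


def load_limits(cfg_text):
    """Parse the config text and return {'section.option': int_value}."""
    parser = configparser.ConfigParser()
    parser.read_string(cfg_text)
    return {
        f"{section}.{option}": parser.getint(section, option)
        for section in parser.sections()
        for option in parser[section]
    }


limits = load_limits(SAMPLE_CFG)
for name, value in sorted(limits.items()):
    print(f"{name} = {value}")
```

Reading the limits into one flat dictionary makes it easy to compare them side by side, for example to check that `core.dag_concurrency` does not exceed `core.parallelism`.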

The configuration options listed above are available since Airflow v1.10.2. Some can be set on a per-DAG or per-operator basis, and fall back to the setup-wide defaults when they are not specified.
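The fallback behaviour can be illustrated with a small plain-Python model. It is only a sketch of the rule described here, not Airflow's internal implementation: `effective_dag_limits` is a hypothetical helper, and the default values in `SETUP_DEFAULTS` are assumed for the example. The mapping between per-DAG options and setup-wide keys is the one stated in the text: `concurrency` falls back to `core.dag_concurrency`, and `max_active_runs` falls back to `core.max_active_runs_per_dag`.

```python
# Assumed setup-wide values for this sketch (placeholders, not recommendations).
SETUP_DEFAULTS = {
    "core.dag_concurrency": 16,
    "core.max_active_runs_per_dag": 16,
}

# Per-DAG option -> setup-wide key it falls back to when not specified.
FALLBACKS = {
    "concurrency": "core.dag_concurrency",
    "max_active_runs": "core.max_active_runs_per_dag",
}


def effective_dag_limits(dag_kwargs, setup_defaults=SETUP_DEFAULTS):
    """Resolve per-DAG limits, using setup-wide defaults for unset options."""
    resolved = {}
    for option, config_key in FALLBACKS.items():
        value = dag_kwargs.get(option)
        # An explicit per-DAG value wins; otherwise use the setup-wide default.
        resolved[option] = setup_defaults[config_key] if value is None else value
    return resolved


# Mirrors DAG('my_dag_id', max_active_runs=1): concurrency is not specified.
print(effective_dag_limits({"max_active_runs": 1}))

# Mirrors DAG('example2', concurrency=10, max_active_runs=2): both specified.
print(effective_dag_limits({"concurrency": 10, "max_active_runs": 2}))
```

In the first call only `max_active_runs` is given, so `concurrency` takes the setup-wide value. In the second call both per-DAG values override the defaults.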
